Robots are not ready for the real world. It’s still an achievement for autonomous robots to merely survive in the real world, which is a long way from any kind of useful generalized autonomy. Under some fairly specific constraints, autonomous robots are starting to find a few valuable niches in semistructured environments, like offices and hospitals and warehouses. But when it comes to the unstructured nature of disaster areas or human interaction, or really any situation that requires innovation and creativity, autonomous robots are often at a loss.

For the foreseeable future, this means that humans are still necessary. It doesn’t mean that humans must be physically present, however—just that a human is in the loop somewhere. And this creates an opportunity.

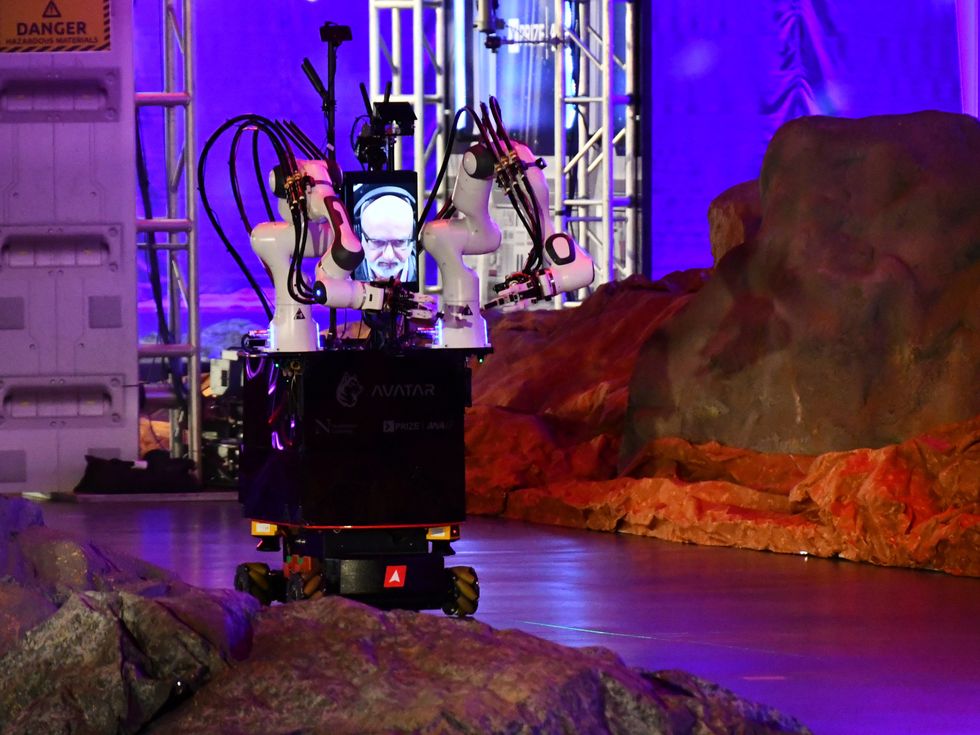

In 2018, the XPrize Foundation announced a competition (sponsored by the Japanese airline ANA) to create “an avatar system that can transport human presence to a remote location in real time,” with the goal of developing robotic systems that could be used by humans to interact with the world anywhere with a decent Internet connection. The final event took place in November 2022 in Long Beach, Calif., where 17 teams from around the world competed for US $8 million in prize money.

While avatar systems are all able to move and interact with their environment, the Avatar XPrize competition showcased a variety of different hardware and software approaches to creating the most effective system. XPrize Foundation

The competition showcased the power of humans paired with robotic systems, transporting our experience and adaptability to a remote location. While the robots and interfaces were very much research projects rather than systems ready for real-world use, the Avatar XPrize provided the inspiration (as well as the structure and funding) to help some of the world’s best roboticists push the limits of what’s possible through telepresence.

A robotic avatar

A robotic avatar system is similar to virtual reality, in that both allow a person located in one place to experience and interact with a different place using technology as an interface. Like VR, an effective robotic avatar enables the user to see, hear, touch, move, and communicate in such a way that they feel like they’re actually somewhere else. But where VR puts a human into a virtual environment, a robotic avatar brings a human into a physical environment, which could be in the next room or thousands of kilometers away.

ANA Avatar XPRIZE Finals: Winning team NimbRo Day 2 Test Run (video on youtu.be)

The XPrize Foundation hopes that avatar robots could one day be used for more practical purposes: providing care to anyone instantly, regardless of distance; disaster relief in areas where it is too dangerous for human rescuers to go; and performing critical repairs, as well as maintenance and other hard-to-come-by services.

“The available methods by which we can physically transport ourselves from one place to another are not scaling rapidly enough,” says David Locke, the executive director of Avatar XPrize. “A disruption in this space is long overdue. Our aim is to bypass the barriers of distance and time by introducing a new means of physical connection, allowing anyone in the world to physically experience another location and provide on-the-ground assistance where and when it is needed.”

Global competition

In the Long Beach convention center, XPrize did its best to create an atmosphere that was part rock concert, part sporting event, and part robotics research conference and expo. The course was set up in an arena with stadium seating (open to the public) and extensively decorated and dramatically lit. Live commentary accompanied each competitor’s run. Between runs, teams worked on their avatar systems in a convention hall, where they could interact with each other as well as with curious onlookers. The 17 teams hailed from France, Germany, Italy, Japan, Mexico, Singapore, South Korea, the Netherlands, the United Kingdom, and the United States. With each team preparing for several runs over three days, the atmosphere was by turns frantic and focused as team members moved around the venue and worked to repair or improve their robots. Major academic research labs set up next to small robotics startups, with each team hoping their unique approach would triumph.

The Avatar XPrize course was designed to look like a science station on an alien planet, and the avatar systems had to complete tasks that included using tools and identifying rock samples. XPrize Foundation

The competition course included a series of tasks that each robot had to perform, based around a science mission on the surface of an alien planet. Completing the course involved communicating with a human mission commander, flipping an electrical switch, moving through an obstacle course, identifying a container by weight and manipulating it, using a power drill, and finally, using touch to categorize a rock sample. Teams were ranked by the amount of time their avatar system took to successfully finish all tasks.

There are two fundamental aspects to an avatar system. The first is the robotic mobile manipulator that the human operator controls. The second is the interface that allows the operator to provide that control, and this is arguably the more difficult part of the system. In previous robotics competitions, like the DARPA Robotics Challenge and the DARPA Subterranean Challenge, the interface was generally based around a traditional computer (or multiple computers) with a keyboard and mouse, and the highly specialized job of operator required an immense amount of training and experience. This approach is not accessible or scalable, however. The competition in Long Beach thus featured avatar systems that were essentially operator-agnostic, so that anyone could effectively use them.

XPrize judge Justin Manley celebrates with NimbRo’s avatar robot after completing the course. Evan Ackerman

“Ultimately, the general public will be the end user,” explains Locke. “This competition forced teams to invest time into researching and improving the operator-experience component of the technology. They had to open their technology and labs to general users who could operate and provide feedback on the experience, and the teams who scored highest also had the most intuitive and user-friendly operating interfaces.”

During the competition, team members weren’t allowed to operate their own robots. Instead, a judge was assigned to each team, and the team had 45 minutes to train the judge on the robot and interface. The judges included experts in robotics, virtual reality, human-computer interaction, and neuroscience, but none of them had previous experience as an avatar operator.

Northeastern team member David Nguyen watches XPrize judge Peggy Wu operate the avatar system during a competition run. XPrize Foundation

Once the training was complete, the judge used the team’s interface to operate the robot through the course, while the team could do nothing but sit and watch. Two team members were allowed to remain with the judge in case of technical problems, and a live stream of the operator room captured the stress and helplessness that teams were under: After years of work and with millions of dollars at stake, it was up to a stranger they’d met an hour before to pilot their system to victory. It didn’t always go well, and occasionally it went very badly, as when a bipedal robot collided with the edge of a doorway on the course during a competition run and crashed to the ground, suffering damage that was ultimately unfixable.

Hardware and humans

The diversity of the teams was reflected in the diversity of their avatar systems. The competition imposed some basic design requirements for the robot, including mobility, manipulation, and a communication interface, but otherwise it was up to each team to design and implement their own hardware and software. Most teams favored a wheeled base with two robotic arms and a head consisting of a screen for displaying the operator’s face. A few daring teams brought bipedal humanoid robots. Stereo cameras were commonly used to provide visual and depth information to the operator, and some teams included additional sensors to convey other types of information about the remote environment.

For example, in the final competition task, the operator needed the equivalent of a sense of touch in order to differentiate a rough rock from a smooth one. While touch sensors for robots are common, translating the data that they collect into something readable by humans is not straightforward. Some teams opted for highly complex (and expensive) microfluidic gloves that transmit touch sensations from the fingertips of the robot to the fingertips of the operator. Other teams used small, finger-mounted vibrating motors to translate roughness into haptic feedback that the operator could feel. Another approach was to mount microphones on the robot’s fingers. As its fingers moved over different surfaces, rough surfaces sounded louder to the operator while smooth surfaces sounded softer.
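The microphone and vibration-motor approaches both come down to turning the energy of a surface signal into something the operator can feel or hear. As a minimal sketch only, and not any team's actual implementation, the Python below maps the root-mean-square amplitude of a window of sensor samples to a motor drive level between 0 and 1. The function names, the `floor` and `ceiling` thresholds, and the synthetic test signals are all hypothetical choices made for illustration.

```python
import math


def rms(samples):
    """Root-mean-square amplitude of a window of sensor samples."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def roughness_to_vibration(samples, floor=0.02, ceiling=0.5):
    """Map signal energy to a motor drive level in [0.0, 1.0].

    `floor` and `ceiling` are hypothetical calibration values: energy at or
    below `floor` is treated as a smooth surface (no vibration), and energy
    at or above `ceiling` saturates the motor.
    """
    level = (rms(samples) - floor) / (ceiling - floor)
    return min(1.0, max(0.0, level))


# Synthetic stand-ins for fingertip signals (assumed, not measured data):
# a low-amplitude signal for a smooth rock, a larger one for a rough rock.
smooth = [0.01 * math.sin(0.3 * i) for i in range(200)]
rough = [0.4 * math.sin(2.1 * i) for i in range(200)]

print("smooth rock drive level:", roughness_to_vibration(smooth))
print("rough rock drive level:", roughness_to_vibration(rough))
```

Clamping the output keeps the drive level inside the motor's range, and the floor threshold keeps sensor noise on a smooth surface from registering as texture.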

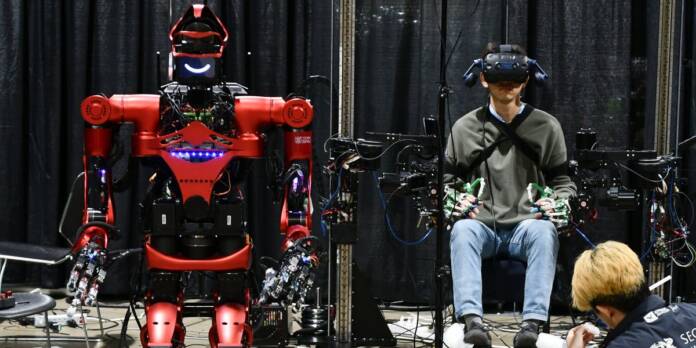

Many teams, including i-Botics [left], relied on commercial virtual-reality headsets as part of their interfaces. Avatar interfaces were made as immersive as possible to help operators control their robots effectively. Left: Evan Ackerman; Right: XPrize Foundation

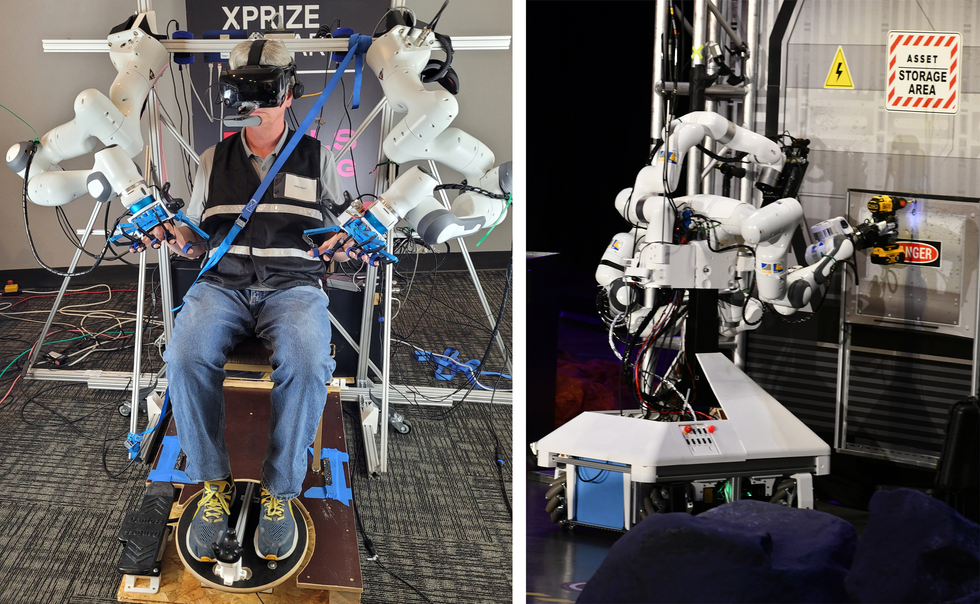

In addition to perceiving the remote environment, the operator had to efficiently and effectively control the robot. A basic control interface might be a mouse and keyboard, or a game controller. But with many degrees of freedom to control, limited operator training time, and a competition judged on speed, teams had to get creative. Some teams used motion-detecting virtual-reality systems to transfer the motion of the operator to the avatar robot. Other teams favored a physical interface, strapping the operator into hardware (almost like a robotic exoskeleton) that could read their motions and then actuate the limbs of the avatar robot to match, while simultaneously providing force feedback. With the operator’s arms and hands busy with manipulation, the robot’s movement across the floor was typically controlled with foot pedals.

Northeastern’s robot moves through the course. Evan Ackerman

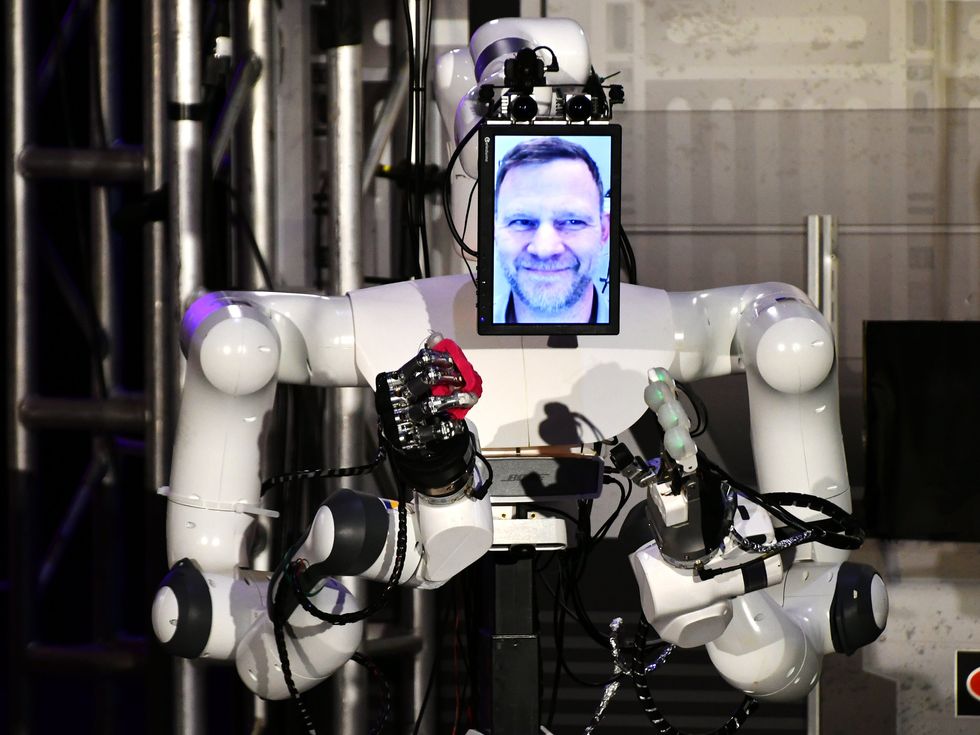

Another challenge of the XPrize competition was how to use the avatar robot to communicate with a remote human. Teams were judged on how natural such communication was, which precluded using text-only or voice-only interfaces; instead, teams had to give their robot some kind of expressive face. This was easy enough for operator interfaces that used screens; a webcam that was pointed at the operator and streamed to display on the robot worked well.

But for interfaces that used VR headsets, where the operator’s face was partially obscured, teams had to find other solutions. Some teams used in-headset eye tracking and speech recognition to map the operator’s voice and facial movements onto an animated face. Other teams dynamically warped a real image of the user’s face to reflect their eye and mouth movements. The interaction wasn’t seamless, but it was surprisingly effective.

Human form or human function?

Team iCub, from the Istituto Italiano di Tecnologia, believed its bipedal avatar was the most intuitive way to transfer natural human motion to a robot. Evan Ackerman

With robotics competitions like the Avatar XPrize, there is an inherent conflict between the broader goal of generalized solutions for real-world problems and the focused objective of the competing teams, which is simply to win. Winning doesn’t necessarily lead to a solution to the problem that the competition is trying to solve. XPrize may have wanted to foster the creation of “avatar system[s] that can transport human presence to a remote location in real time,” but the winning team was the one that most efficiently completed the very specific set of competition tasks.

For example, Team iCub, from the Istituto Italiano di Tecnologia (IIT) in Genoa, Italy, believed that the best way to transport human presence to a remote location was to embody that human as closely as possible. To that end, IIT’s avatar system consisted of a small bipedal humanoid robot—the 100-centimeter-tall iCub. Getting a bipedal robot to walk reliably is a challenge, especially when that robot is under the direct control of an inexperienced human. But even under ideal conditions, there was simply no way that iCub could move as quickly as its wheeled competitors.

XPrize decided against a course that would have rewarded humanlike robots—there were no stairs on the course, for example—which prompts the question of what “human presence” actually means. If it means being able to go wherever able-bodied humans can go, then legs might be necessary. If it means accepting that robots (and some humans) have mobility limitations and consequently focusing on other aspects of the avatar experience, then perhaps legs are optional. Whatever the intent of XPrize may have been, the course itself ultimately dictated what made for a successful avatar for the purposes of the competition.

Avatar optimization

Unsurprisingly, the teams that focused on the competition and optimized their avatar systems accordingly tended to perform well. Team Northeastern won third place and $1 million using a hydrostatic force-feedback interface for the operator. The interface was based on a system of fluidic actuators first conceptualized a decade ago at Disney Research.

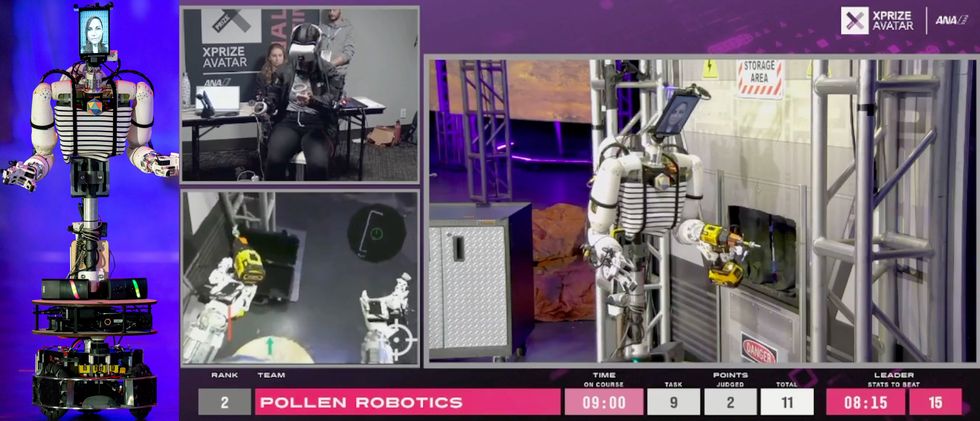

Second place went to Team Pollen Robotics, a French startup. Their robot, Reachy, is based on Pollen Robotics’ commercially available mobile manipulator, and it was likely one of the most affordable systems in the competition, costing a mere €20,000 (US $22,000). It used primarily 3D-printed components and an open-source design. Reachy was an exception to the strategy of optimization, because it’s intended to be a generalizable platform for real-world manipulation. But the team’s relatively simple approach helped them win the $2 million second-place prize.

In first place, completing the entire course in under 6 minutes with a perfect score, was Team NimbRo, from the University of Bonn, in Germany. NimbRo has a long history of robotics competitions; they participated in the DARPA Robotics Challenge in 2015 and have been involved in the international RoboCup competition since 2005. But the Avatar XPrize allowed them to focus on new ways of combining human intelligence with robot-control systems. “When I watch human intelligence operating a machine, I find that fascinating,” team lead Sven Behnke told IEEE Spectrum. “A human can see deviations from how they are expecting the machine to behave, and then can resolve those deviations with creativity.”

Team NimbRo’s system relied heavily on the human operator’s own senses and knowledge. “We try to take advantage of human cognitive capabilities as much as possible,” explains Behnke. “For example, our system doesn’t use sensors to estimate depth. It simply relies on the visual cortex of the operator, since humans have evolved to do this in tremendously efficient ways.” To that end, NimbRo’s robot had an unusually long and flexible neck that followed the motions of the operator’s head. During the competition, the robot’s head could be seen shifting from side to side as the operator used parallax to understand how far away objects were. It worked quite well, although NimbRo had to implement a special rendering technique to minimize latency between the operator’s head motions and the video feed from the robot, so that the operator didn’t get motion sickness.
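The parallax cue described above can be made concrete with a little geometry. The sketch below is not NimbRo's software (Behnke says the system does not estimate depth at all). It only illustrates, under a simple pinhole-camera assumption, why a sideways head movement makes nearby objects slide across the view more than distant ones. The function name and the focal length of 800 pixels are hypothetical.

```python
def image_shift_px(head_shift_m, distance_m, focal_px=800.0):
    """Apparent sideways shift, in pixels, of an object seen by a pinhole
    camera that moves laterally by `head_shift_m` meters.

    Assumes the object is `distance_m` meters straight ahead and that
    `focal_px` (a hypothetical value) is the focal length in pixels.
    """
    if distance_m <= 0:
        raise ValueError("distance_m must be positive")
    return focal_px * head_shift_m / distance_m


# The same 10-centimeter head movement, viewed against a near and a far object.
near = image_shift_px(0.10, 0.5)
far = image_shift_px(0.10, 5.0)
print("near object shift (px):", near)
print("far object shift (px):", far)
```

Because the shift is inversely proportional to distance, an object 10 times as far away moves one-tenth as much in the image, which is the difference the operator's visual system picks up on.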

XPrize judge Jerry Pratt [left] operates NimbRo’s robot on the course [right]. The drill task was particularly difficult, involving lifting a heavy object and manipulating it with high precision. Left: Team NimbRo; Right: Evan Ackerman

The team also put a lot of effort into making sure that using the robot to manipulate objects was as intuitive as possible. The operator’s arms were directly attached to robotic arms, which were duplicates of the arms on the avatar robot. This meant that any arm motions made by the operator would be mirrored by the robot, yielding a very consistent experience for the operator.

The future of hybrid autonomy

The operator judge for Team NimbRo’s winning run was Jerry Pratt, who spent decades as a robotics professor at the Florida Institute for Human and Machine Cognition before joining humanoid robotics startup Figure last year. Pratt had led Team IHMC (and a Boston Dynamics Atlas robot) to a second-place finish at the DARPA Robotics Challenge Finals in 2015. “I found it incredible that you can learn how to use these systems in 60 minutes,” Pratt said of his XPrize run. “And operating them is super fun!” Pratt’s winning time of 5:50 to complete the Avatar XPrize course was not much slower than human speed.

At the 2015 DARPA Robotics Challenge finals, by contrast, the Atlas robot had to be painstakingly piloted through the course by a team of experts. It took that robot 50 minutes to complete the course, which a human could have finished in about 5 minutes. “Trying to pick up things with a joystick and mouse [during the DARPA competition] is just really slow,” Pratt says. “Nothing beats being able to just go, ‘Oh, that’s an object, let me grab it’ with full telepresence. You just do it.”

Team Pollen’s robot [left] had a relatively simple operator interface [middle], but that may have been an asset during the competition [right]. Pollen Robotics

Both Pratt and NimbRo’s Behnke see humans as a critical component of robots operating in the unstructured environments of the real world, at least in the short term. “You need humans for high-level decision making,” says Pratt. “As soon as there’s something novel, or something goes wrong, you need human cognition in the world. And that’s why you need telepresence.”

Behnke agrees. He hopes that what his group has learned from the Avatar XPrize competition will lead to hybrid autonomy through telepresence, in which robots are autonomous most of the time but humans can use telepresence to help the robots when they get stuck. This approach is already being implemented in simpler contexts, like sidewalk delivery robots, but not yet in the kind of complex human-in-the-loop manipulation that Behnke’s system is capable of.

“Step by step, my objective is to take the human out of that loop so that one operator can control maybe 10 robots, which would be autonomous most of the time,” Behnke says. “And as these 10 systems operate, we get more data from which we can learn, and then maybe one operator will be responsible for 100 robots, and then 1,000 robots. We’re using telepresence to learn how to do autonomy better.”

The entire Avatar XPrize event is available to watch through this live stream recording on YouTube (www.youtube.com).

While the Avatar XPrize final competition was based around a space-exploration scenario, Behnke is more interested in applications in which a telepresence-mediated human touch might be even more valuable, such as personal assistance. Behnke’s group has already demonstrated how their avatar system could be used to help someone with an injured arm measure their blood pressure and put on a coat. These sound like simple tasks, but they involve exactly the kind of human interaction and creative manipulation that is exceptionally difficult for a robot on its own. Immersive telepresence makes these tasks almost trivial, and accessible to just about any human with a little training—which is what the Avatar XPrize was trying to achieve.

Still, it’s hard to know how scalable these technologies are. At the moment, avatar systems are fragile and expensive. Historically, there has been a gap of about five to 10 years between high-profile robotics competitions and the arrival of the resulting technology—such as autonomous cars and humanoid robots—at a useful place outside the lab. It’s possible that autonomy will advance quickly enough that the impact of avatar robots will be somewhat reduced for common tasks in structured environments. But it’s hard to imagine that autonomous systems will ever achieve human levels of intuition or creativity. That is, there will continue to be a need for avatars for the foreseeable future. And if these teams can leverage the lessons they’ve learned over the four years of the Avatar XPrize competition to pull this technology out of the research phase, their systems could bypass the constraints of autonomy through human cleverness, bringing us useful robots that are helpful in our daily lives.

This article appears in the May 2023 print issue as “Human in the Loop.”
